This is not the whole story: the boost frequency might not be thermally sustainable for some applications. A typical 2D launch configuration uses 16 × 16 thread blocks: `const int size = 16; dim3 blockSize(size, size); dim3 gridSize((numRows - 1) / size + 1, …);`

Each cubin file targets a specific compute-capability version and is forward-compatible only with CUDA architectures of the same major version number.
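The grid size in a launch configuration like this relies on ceiling division, so that a partial tile at the edge of the data still gets its own block. A minimal host-side sketch, assuming a hypothetical helper `blocksFor` that is not part of the original code:

```cpp
#include <cassert>

// Hypothetical helper: number of blocks of `size` threads needed to cover
// `n` elements along one dimension, i.e. ceil(n / size).
// For n >= 1 this equals (n - 1) / size + 1; this form also returns 0 for n == 0.
// Assumes n + size - 1 does not overflow unsigned int.
unsigned int blocksFor(unsigned int n, unsigned int size) {
    assert(size > 0);
    return (n + size - 1) / size;
}
```

With `size = 16`, a 1000-row input needs `blocksFor(1000, 16)`, which is 63 blocks along that dimension. The last block covers rows 992 to 1007, so the kernel itself should still bounds-check its row and column indices before touching memory.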
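The cubin compatibility rule can be written as a small predicate. This is a sketch under stated assumptions: `ComputeCapability`, `cubinRunsOn`, and `ptxRunsOn` are hypothetical names, and the PTX rule (the driver JIT-compiles PTX for any device of equal or higher compute capability) comes from NVIDIA's compatibility documentation rather than from this post.

```cpp
#include <cassert>

// A compute capability such as 5.0 or 7.2 (major.minor).
struct ComputeCapability {
    int major;
    int minor;
};

// Cubin (SASS) built for X.y runs on devices X.z with z >= y,
// but never across a major version boundary.
bool cubinRunsOn(ComputeCapability built, ComputeCapability device) {
    assert(built.major > 0 && device.major > 0);
    return built.major == device.major && device.minor >= built.minor;
}

// PTX is JIT-compiled by the driver at load time, so PTX built for X.y
// can target any device whose compute capability is at least X.y.
bool ptxRunsOn(ComputeCapability built, ComputeCapability device) {
    assert(built.major > 0 && device.major > 0);
    return device.major > built.major ||
           (device.major == built.major && device.minor >= built.minor);
}
```

For example, a binary containing only a compute-capability 3.0 cubin fails `cubinRunsOn({3, 0}, {5, 0})`. On a compute-capability 5.0 GPU with no embedded PTX to fall back on, that is one plausible cause of a "no kernel image is available for execution on the device" error.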

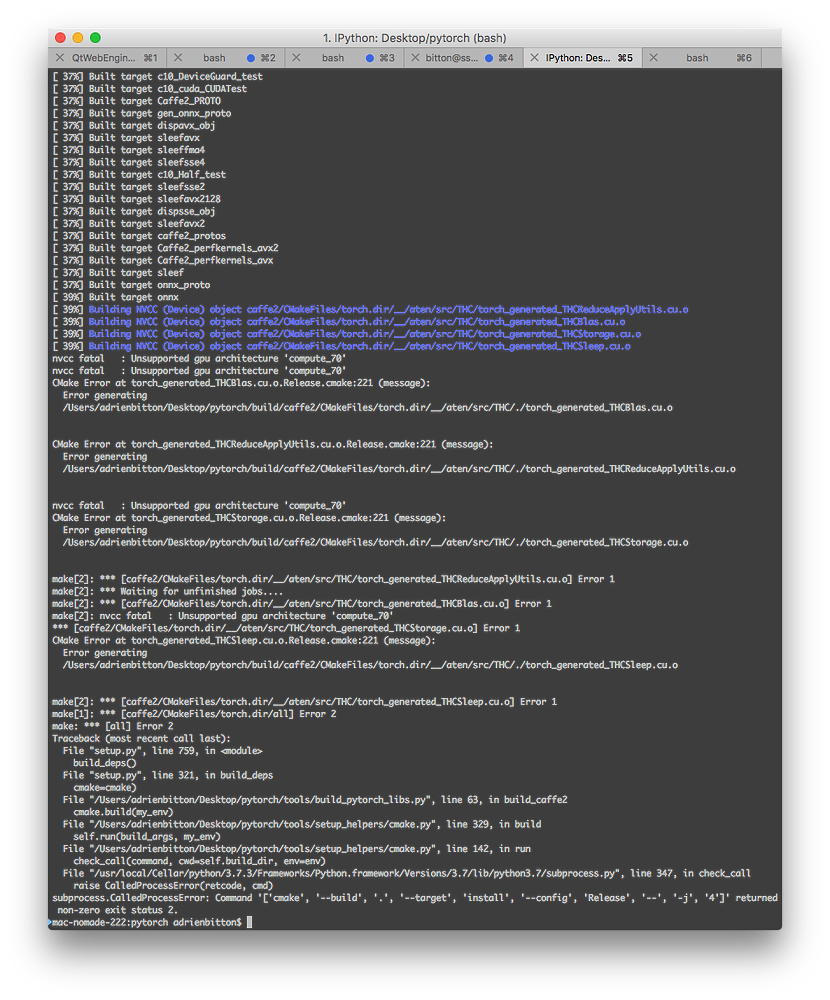

This is the output that I got for the `nvcc -V` command: `Copyright (c) 2005-2020 NVIDIA Corporation`, `Cuda compilation tools, release 11.2, V11.2.67`, `Build cuda_11.2.r11.2/compiler.`

The theoretical peak FLOPS are calculated as follows:

$$
(1.455\ \text{GHz}) \times (80\ \text{SMs}) \times (64\ \text{CUDA cores per SM}) \times (2\ \text{FLOPs per fused multiply-add}) \approx 14.9\ \text{TFLOPS (single precision)}
$$

and half that, 7.45 TFLOPS, in double precision.

Specifically, it is the use of GPU computing and CUDA programming as a …

The NVIDIA CUDA C compiler, nvcc, can be used to generate both architecture-specific cubin files and forward-compatible PTX versions of each kernel.

By using CUDA, you can target the bottleneck 5% of the code, which takes 90% of the execution time.

Please check that your cudatoolkit version matches your CUDA version.

… hardware (the CUDA parallel compute architecture within the GPU).

I am getting the error `CUDA Error: no kernel image is available for execution on the device` while running darknet. To solve this error, I need to set CC (CUDA Compute Capability) 5.0 in CMake. I am using CUDA 11.4 (reported as version 11040) on Windows 10 with CMake 3.21.3, and two GPUs: an NVIDIA Quadro M1200 and Intel HD Graphics 630.

CUDA 5.0 introduced Dynamic Parallelism, which makes it possible to launch kernels from threads running on the device: threads can launch more threads. An application can launch a coarse-grained kernel which in turn launches finer-grained kernels to do work where needed.

I noticed that `cudaErrorInvalidValue` is returned by `cudaGetLastError` after a kernel launch with too many blocks (it looks like compute capability 2.0 cannot handle 1 billion blocks, while 5.0 can), so there are limits here too.
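The peak-throughput arithmetic for the 80-SM GPU is easy to reproduce in code. A minimal sketch; `peakTflops` is a hypothetical name, and the double-precision figure assumes 32 FP64 units per SM (half the FP32 count), which is what the quoted 7.45 TFLOPS implies rather than something the post states.

```cpp
#include <cassert>

// Theoretical peak throughput in TFLOPS:
// clock (GHz) x SMs x floating-point units per SM x FLOPs per instruction.
// A fused multiply-add (a * b + c) counts as 2 floating-point operations.
double peakTflops(double clockGHz, int numSMs, int unitsPerSM, int flopsPerInstr) {
    assert(clockGHz > 0.0 && numSMs > 0 && unitsPerSM > 0 && flopsPerInstr > 0);
    // GHz times the unit count gives GFLOPS; divide by 1000 for TFLOPS.
    return clockGHz * numSMs * unitsPerSM * flopsPerInstr / 1000.0;
}
```

`peakTflops(1.455, 80, 64, 2)` gives about 14.9 (single precision), and `peakTflops(1.455, 80, 32, 2)` gives about 7.45 under the FP64 assumption above. Both numbers are ceilings at the boost clock; sustained clocks, and therefore real throughput, can be lower.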
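The 5%/90% split matters because of Amdahl's law: the 10% of execution time that is not accelerated caps the overall speedup at 10×, however fast the GPU part becomes. A minimal sketch, with `amdahlSpeedup` as a hypothetical helper:

```cpp
#include <cassert>

// Amdahl's law: overall speedup when a fraction p of the runtime is
// accelerated by a factor s and the remaining (1 - p) is unchanged.
double amdahlSpeedup(double p, double s) {
    assert(p >= 0.0 && p <= 1.0 && s > 0.0);
    return 1.0 / ((1.0 - p) + p / s);
}
```

With `p = 0.9`, a 10× faster kernel yields only about 5.26× overall, and even an effectively infinite speedup of the hot spot approaches but never reaches 10×.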
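The block-count limit observed with too many blocks matches the documented maximum x-dimension of a grid: 65535 blocks for compute capability 2.x and earlier, and 2^31 - 1 from compute capability 3.0 onward (per the technical-specifications table in the CUDA C++ Programming Guide). A sketch of a host-side pre-launch check, with `maxGridDimX` and `gridFits` as hypothetical helpers:

```cpp
#include <cassert>
#include <cstdint>

// Maximum gridDim.x by compute-capability major version:
// 65535 up to 2.x, 2^31 - 1 from 3.0 onward.
std::int64_t maxGridDimX(int ccMajor) {
    assert(ccMajor >= 1);
    return ccMajor >= 3 ? INT64_C(2147483647) : INT64_C(65535);
}

// Hypothetical pre-launch check: does a 1D grid of numBlocks fit?
bool gridFits(std::int64_t numBlocks, int ccMajor) {
    return numBlocks >= 1 && numBlocks <= maxGridDimX(ccMajor);
}
```

A 1D grid of one billion blocks fails `gridFits(1000000000, 2)` but passes `gridFits(1000000000, 5)`, consistent with the observation that compute capability 2.0 rejects such a launch while 5.0 accepts it.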
I am testing some sample CUDA functions to analyse the GPU performance of a Jetson Xavier NX device. Here is the code that I am executing in a JupyterLab notebook: `add_gpu(d_a, d_out)` … This code worked fine in CUDA 10.2, but I recently upgraded from CUDA 10.2 to 11.2. When I ran the same code with 11.2, I got the following error: `NvvmSupportError: No supported GPU compute capabilities found.`
